California Prepares for Landmark Medicaid Work Requirements Amid Growing Budget Deficits and Federal Policy Shifts

The State of California, home to the nation’s largest Medicaid program, is entering a period of significant administrative and fiscal transformation as it prepares to implement federally mandated work requirements for its Medi-Cal enrollees. Under the 2025 federal reconciliation law, states must begin conditioning Medicaid eligibility for adults in the Affordable Care Act (ACA) expansion group on meeting specific employment or community engagement criteria starting January 1, 2027. This mandate also extends to enrollees in partial expansion waiver programs, such as those in Georgia and Wisconsin, but the scale of the impact in California is unprecedented. With nearly 15 million residents enrolled in Medi-Cal, the state faces a complex intersection of system overhauls, shifting federal funding landscapes, and a deteriorating state budget that complicates the path toward compliance.

The Fiscal Landscape: A Growing Structural Deficit

California’s preparation for the 2027 work requirement deadline is occurring against a backdrop of increasing fiscal volatility. After several years of record-breaking revenue growth following the initial pandemic-induced economic downturn, the state now faces a more precarious fiscal outlook. Governor Gavin Newsom’s recent budget projections show a $3 billion structural deficit for fiscal year (FY) 2027, a gap expected to widen to $22 billion by FY 2028. The projected shortfall reflects slowing revenue growth combined with steadily rising spending on essential services, including education, disaster response, and healthcare.

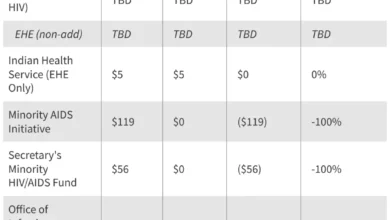

The 2025 reconciliation law plays a central role in this budgetary strain. The Governor’s office estimates that the federal law will impose costs of approximately $1.4 billion on the state’s General Fund in FY 2027 alone, with $1.1 billion specifically attributed to changes in the Medicaid program. While the state anticipates some reduction in spending due to the projected disenrollment of individuals who fail to meet the new requirements, these savings are expected to be offset by the administrative costs of implementation and changes to federal financing mechanisms, such as the managed care tax and the hospital quality assurance fee.

To mitigate these pressures, California has already begun implementing cost-saving measures within the Medi-Cal program. In FY 2026, the state reinstated the asset test for seniors and persons with disabilities, ended coverage of GLP-1 medications for weight loss, and terminated supplemental payments for dental services. The state has also moved to restrict its state-funded health program for immigrant adults who would qualify for Medi-Cal but for their immigration status. These restrictions include pausing new enrollments, introducing cost sharing, and reducing payments to community health centers.

Chronology of Implementation and Federal Mandates

The transition toward work requirements follows a timeline set largely by the 2025 federal reconciliation law, alongside state and local budget actions.

- June 2025: California reports 14.8 million Medi-Cal enrollees, identifying the scope of the potential impact.

- July 2025: Enactment of the federal reconciliation law, establishing the framework for work requirements.

- Fiscal Year 2026 (which began July 1, 2025): California implements initial Medicaid spending cuts to address revenue volatility.

- December 2025: Local efforts begin as voters in one California county approve a sales tax measure to backfill anticipated federal healthcare funding gaps.

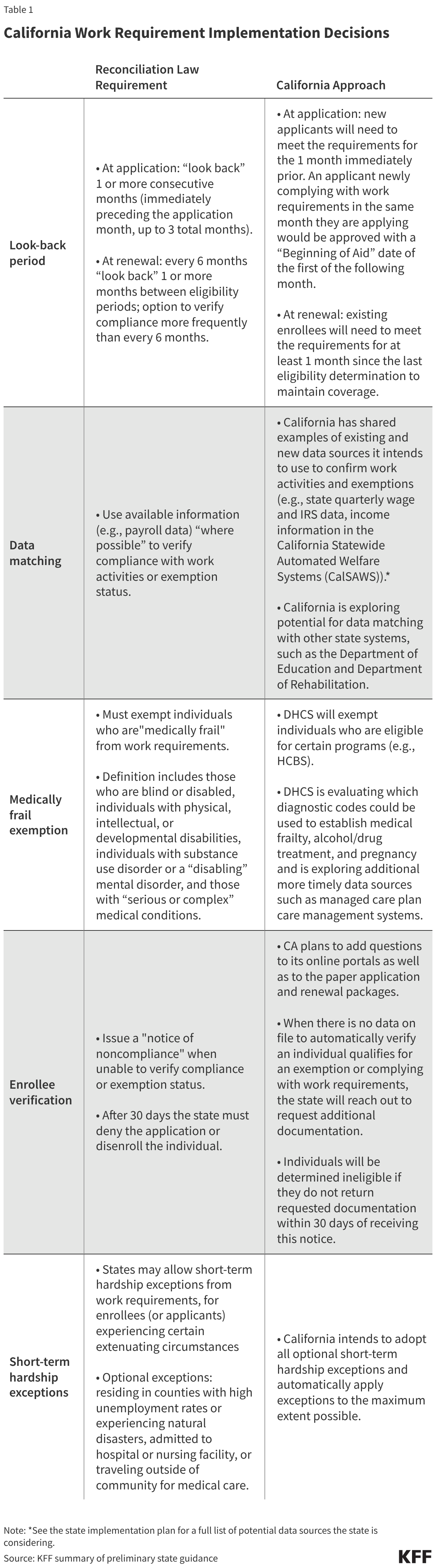

- October 2026: The mandatory three-month outreach period begins, requiring the state to notify enrollees of the upcoming requirements via mail and other communication channels.

- January 1, 2027: Official commencement of Medicaid work requirements and the first compliance "look-back" periods.

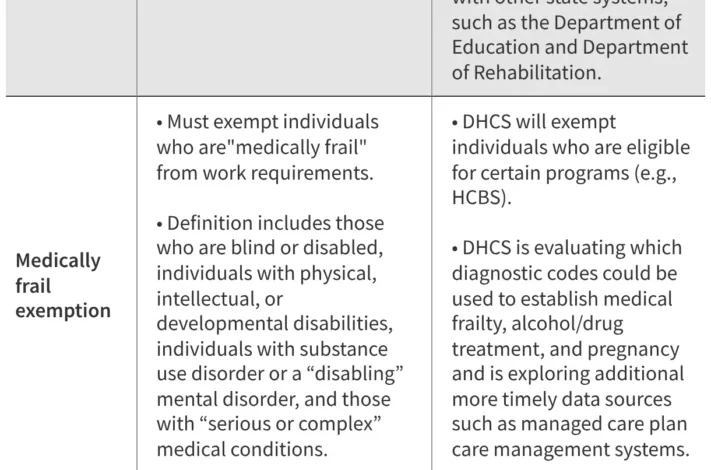

As the state moves through this timeline, officials are awaiting further federal guidance on critical definitions, such as the criteria for "medically frail" exemptions and the acceptable forms of self-attestation for work hours. The lack of finalized federal standards has created a period of administrative uncertainty for state planners.

Analyzing the Impact: Enrollment and Workforce Data

The implementation of work requirements is expected to trigger a significant shift in Medi-Cal enrollment. KFF analysis indicates that as of mid-2025, approximately five million expansion enrollees in California could be subject to the new rules. However, the data suggest that many of those affected already work or attend school: approximately 63% of Medicaid adults without dependent children in the state currently work at least 80 hours per month or are enrolled in school.

Despite the high percentage of working enrollees, the administrative burden of verifying compliance remains a primary concern. California state officials estimate that between the start of implementation and the end of the reconciliation law’s transition period, up to 1.4 million individuals could be disenrolled from Medi-Cal. Many of these losses are expected to be "procedural" rather than substantive—meaning individuals who are eligible and working may lose coverage simply because they fail to navigate the complex reporting and documentation requirements.
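To make the procedural-versus-substantive distinction concrete, the Python sketch below models a single enrollee-month. It is a hypothetical, simplified illustration, not California’s or the federal government’s actual eligibility logic: it assumes that only verified work hours (against the 80-hours-per-month threshold cited above) and school enrollment count, and it ignores exemptions such as "medically frail" status and any other qualifying activities the final rules may recognize. The names `MonthlyRecord` and `classify` are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Threshold cited in the article: at least 80 hours of work per month.
MONTHLY_HOUR_THRESHOLD = 80


@dataclass
class MonthlyRecord:
    """One enrollee-month as the state sees it (hypothetical structure)."""
    # Hours the state could verify for the month; None means nothing was reported or matched.
    verified_hours: Optional[float]
    # Simplification: any verified school enrollment counts as meeting the requirement.
    enrolled_in_school: bool = False


def classify(record: MonthlyRecord) -> str:
    """Return 'compliant', 'noncompliant', or 'procedural' (illustrative labels only)."""
    if record.enrolled_in_school:
        return "compliant"
    if record.verified_hours is None:
        # The enrollee may well be working, but nothing is on file, so coverage
        # is at risk for procedural rather than substantive reasons.
        return "procedural"
    if record.verified_hours >= MONTHLY_HOUR_THRESHOLD:
        return "compliant"
    return "noncompliant"


# Example enrollee-months:
print(classify(MonthlyRecord(verified_hours=120)))   # compliant
print(classify(MonthlyRecord(verified_hours=40)))    # noncompliant
print(classify(MonthlyRecord(verified_hours=None)))  # procedural
```

Under this model, a person who works full time but whose hours never reach the state’s records lands in the `procedural` bucket, which is the kind of coverage loss officials are warning about.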

Data from December 2025 illustrates the potential for procedural hurdles. While California has a relatively high "ex parte" renewal rate—where the state verifies eligibility automatically using existing data sources—nearly 92% of all disenrollments in late 2025 were due to procedural reasons. This rate is significantly higher than the national average of 78%, signaling that the state’s systems may struggle with the added manual workload required to track work hours and exemptions for millions of residents.

Official Responses and Administrative Strategy

The California Department of Health Care Services (DHCS) and the Governor’s office have outlined a multi-faceted strategy to manage the transition. Central to this plan is the Medicaid Advisory Committee (MAC), which includes enrollees, advocates, and healthcare providers. In recent MAC meetings, state officials emphasized the importance of a robust outreach and communication plan to prevent mass disenrollment.

The Governor’s proposed budget includes $4 million specifically allocated for "navigators"—trained professionals who assist enrollees with eligibility, enrollment, and retention. These navigators will be tasked with educating the public on what constitutes a work activity and how to report hours to the state. The outreach plan involves multiple touchpoints, including mailers, digital notifications, and coordination with managed care plans and local providers.

In addition to state-level efforts, local governments are beginning to take independent action. In December 2025, voters in one California county approved a sales tax measure specifically designed to backfill the funding gaps created by the reconciliation law. Analysts expect other counties, particularly those with high concentrations of safety-net clinics and vulnerable populations, to consider similar ballot measures in late 2026 to protect local healthcare infrastructure from the anticipated federal cuts.

Broader Implications for Healthcare Access and Rural Stability

The introduction of work requirements carries profound implications for the stability of California’s healthcare system, particularly in rural and underserved areas. While the state received $233 million from the federal Rural Health Transformation Program in 2025, experts warn that this funding is unlikely to fully compensate for the long-term losses caused by the reconciliation law. Rural hospitals and clinics, which often operate on thin margins, are highly sensitive to fluctuations in Medicaid enrollment. Disenrollment of up to 1.4 million people could drive a spike in uncompensated care, threatening the financial viability of these essential facilities.

Furthermore, California’s choice to apply work requirements voluntarily to its state-funded program for immigrant adults, which the federal mandate does not cover, reflects a broader policy shift. By aligning state-funded coverage with federal Medicaid requirements, California aims to create a unified administrative framework, but at the cost of raising barriers to care for a population that already faces significant social and economic hurdles.

The success of California’s implementation will likely depend on the state’s ability to refine its data-matching systems. Currently, about 1.8 million individuals can be determined exempt or compliant through automated sources. Increasing this number through better cross-system data sharing will be essential to reducing the manual workload on state staff and minimizing procedural disenrollments.
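The share of the verification workload that automation already covers can be estimated from two figures in this article. The Python sketch below assumes, loosely, that the 1.8 million individuals who can be verified automatically are a subset of the roughly five million expansion enrollees who could be subject to the rules; the two estimates may not describe exactly the same population or point in time, so the result is only a rough order of magnitude.

```python
# Approximate figures from the article (assumed to describe the same population).
subject_to_rules = 5_000_000  # expansion enrollees who could be subject to the rules
auto_verified = 1_800_000     # determinable as exempt or compliant via automated sources

# Everyone not covered by automated data matching needs some other form of verification.
manual_review = subject_to_rules - auto_verified
auto_share = auto_verified / subject_to_rules

print(f"Automated share: {auto_share:.0%}")               # Automated share: 36%
print(f"Needing other verification: {manual_review:,}")  # Needing other verification: 3,200,000
```

On these assumptions, roughly two of every three people subject to the rules would still require manual or enrollee-initiated verification, which is why expanding cross-system data matching matters so much for limiting procedural disenrollments.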

As 2027 approaches, California serves as a primary case study for the nation. The state’s journey through budget deficits, technical system upgrades, and large-scale public outreach will provide critical data on whether Medicaid work requirements can be implemented without dismantling the healthcare safety net. For now, state officials remain focused on the dual challenge of balancing the books while ensuring that the millions of Californians who rely on Medi-Cal do not fall through the administrative cracks.